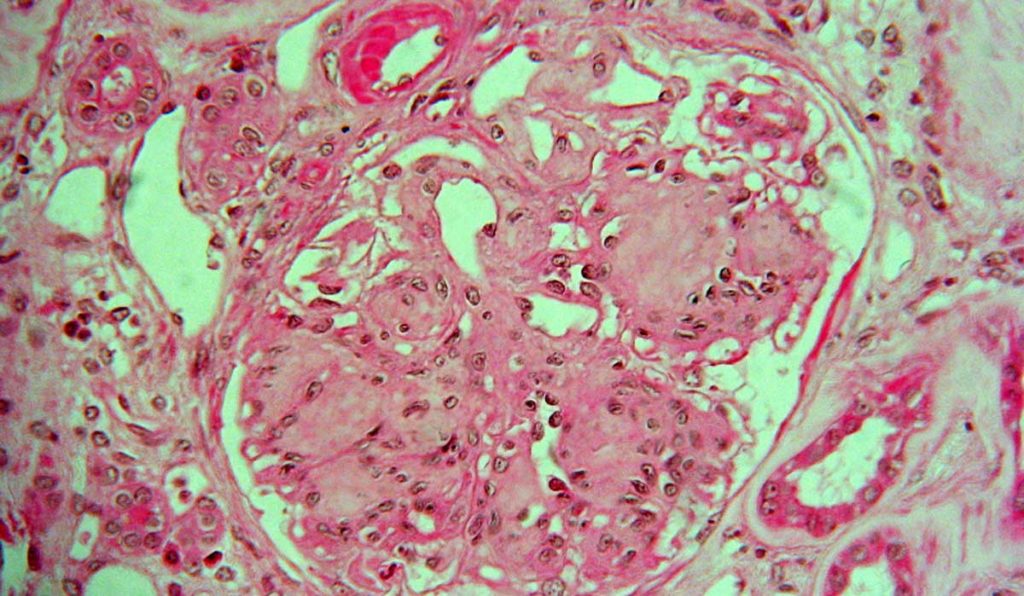

Diabetic nephropathy (DN) is defined by elevated urine albumin excretion or reduced glomerular filtration rate (GFR), or both. This serious complication causes destructive scarring in 20 to 40 percent of all diabetics, with minimal tissue regeneration possible. While DN may be diagnosed clinically, pathology is often needed to confirm the diagnosis and establish the severity of the injury.

Pathologists usually classify DN based on a visual assessment of glomerular pathology using immunofluorescence microscopy and electron microscopy. Although diagnostic guidelines have been well established, scoring of severity of the lesions may vary among pathologists.

“All of this involves pathologists studying slides, looking at the glomeruli in a biopsy to determine if they are normal or abnormal,” said Agnes Fogo, M.D., the John L. Shapiro Chair of Pathology at Vanderbilt University Medical Center. “In addition to making the correct diagnosis of diabetic nephropathy, we want to be able to assess the severity of the injury.”

Fogo recently co-authored a study, published in the Journal of the American Society of Nephrology and led by Pinaki Sarder, Ph.D., of the University at Buffalo, to determine whether an artificial intelligence (AI)-driven process called computational segmentation can improve classification of the severity of diabetic glomerulosclerosis.

Machine Learning to Classify Samples

The researchers combined traditional image analysis with a novel machine learning model. Their goal was to determine whether the machine learning approach could capture important structures more efficiently and minimize manual effort. Slide images from 54 patients with DN were used to train the machine learning algorithms.

To computationally quantify complex glomerular structure, the investigators reduced it to three components: nuclei, capillary lumina and Bowman spaces, and periodic acid-Schiff positive structures. They detected glomerular boundaries and nuclei from whole slide images using convolutional neural networks, and the remaining glomerular structures using an unsupervised technique developed expressly for the study. They also defined a set of digital features quantifying the structural progression of DN, and a recurrent network architecture to process these features into a classification.

There was substantial agreement between the digital classifications and those made by three senior pathologists, suggesting that computational approaches can be comparable to traditional human visual classification.
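For readers curious how agreement between a model and a pathologist can be quantified, the sketch below computes Cohen's kappa, one common chance-corrected agreement statistic. This is an illustration only: the article does not state which statistic the study used, and the function name `cohen_kappa` and the class labels in the example are hypothetical, not data from the study.

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two raters over the same samples."""
    if len(labels_a) != len(labels_b) or len(labels_a) == 0:
        raise ValueError("both raters must label the same non-empty set of samples")
    n = len(labels_a)

    # Fraction of samples on which the two raters gave the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    # Agreement expected by chance, from each rater's label frequencies.
    count_a = Counter(labels_a)
    count_b = Counter(labels_b)
    expected = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)

    if expected == 1.0:
        # Both raters used a single identical label for every sample.
        return 1.0
    return (observed - expected) / (1.0 - expected)


# Hypothetical severity classes for eight biopsies (not study data).
pathologist = ["I", "IIa", "IIb", "III", "IV", "IIa", "III", "IIb"]
model = ["I", "IIa", "IIb", "III", "IV", "IIb", "III", "IIb"]
print(round(cohen_kappa(pathologist, model), 3))
```

A value of 1 means perfect agreement and 0 means agreement no better than chance. For ordered severity classes, a weighted variant that penalizes near-misses less than distant disagreements is often preferred.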

“This study is the first to demonstrate that we can employ computational tools to conduct tasks that renal pathologists do; thus, this approach can improve precision in a clinical workflow,” Sarder said.

Expanding AI in Renal Disease

“In the future, we hope to train the computer to discern things the human eye can’t discern,” Fogo said. “Are there any identifying characteristics in patients who have different outcomes?” She also hopes that the machine learning model can be taught to classify other, more complex renal diseases such as lupus nephritis, rejection in kidney transplants, and kidney cancers.

While machine learning may advance diagnosis in devastating kidney disease, Fogo thinks there will always be a need for expert pathology. “If you want the instrument to do what pathologists do,” she said, “you must have a well-developed gold standard and input huge, complex data sets to define archetypes that are potentially meaningful.”

“We’ll still need experts to integrate all the existing data. But if you could have the computer mark the area of concern and have your eye drawn to that, it could save time in diagnosis and potentially lead to recognition of specific abnormalities to support precise interventions.”